Error :- PANIC: Root volume: "aggr0" is corrupt in process config_thread on release NetApp Release 7.3.2 on Fri Jul 3 08:33:45 GMT 2016

version: NetApp Release 7.3.2: Thu Oct 15 04:17:39 PDT 2009

cc flags: 8O

halt after panic during system initialization

AMI BIOS8 Modular BIOS

Copyright (C) 1985-2006, American Megatrends, Inc. All Rights Reserved

Portions Copyright (C) 2006 Network Appliance, Inc. All Rights Reserved

BIOS Version 3.0

+++++++++++++++

Solution:- In this case most of us would either hit a dead end or contact NetApp Technical Support.

But what if the support contract has already ended and there is no more support from NetApp? :( That was exactly the situation I faced with one of my customers, and I had to deal with it and fix it.

NetApp has some excellent features, and one of them is NETBOOT. In case you don’t know about NETBOOT, here is a little introduction.

Netboot is a procedure that can be used as an alternative way to boot a NetApp storage system from a Data ONTAP software image that is stored on an HTTP or TFTP server. Netboot is typically used to facilitate specific recovery scenarios. Some common scenarios are: correcting a failed upgrade, repairing a failed boot media, and booting the correct kernel for the current hardware platform.

In our case, we can netboot the controller from a TFTP or HTTP server and then repair the root volume using WAFL_check or wafliron.
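As a rough illustration of the "image stored on an HTTP server" part, here is a minimal sketch that serves a directory over HTTP using only the Python standard library. It assumes a workstation with Python 3.7 or later that the controller can reach over the network. The function name `start_image_server`, the directory, and the port are hypothetical choices for this example, and it uses HTTP rather than the TFTP server on the partner node described in the procedure.

```python
import functools
import http.server
import threading


def start_image_server(directory, port=8080):
    """Serve the files under `directory` over HTTP in a background thread.

    `directory` is assumed to hold the Data ONTAP netboot image you
    downloaded; the path and port are illustrative, not NetApp defaults.
    Returns the server object so the caller can call shutdown() later.
    """
    # Bind the stock static-file handler to the chosen directory.
    handler = functools.partial(
        http.server.SimpleHTTPRequestHandler, directory=directory
    )
    # An empty host string listens on all interfaces.
    server = http.server.ThreadingHTTPServer(("", port), handler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    return server
```

Calling `start_image_server` with the directory that holds the image keeps the files available in the background until `shutdown()` is called on the returned server. This is only suitable for a trusted, isolated recovery network, since it has no authentication.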

Procedure:-

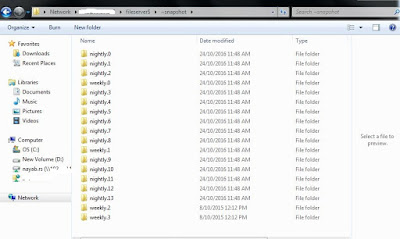

Set up a TFTP server on the partner node.

Netboot the node with the corrupted /vol/vol0.

Now run WAFL_check or wafliron on the corrupted aggregate (most likely it will be reported as inconsistent). Try WAFL_check first, as it runs faster; if that doesn't work, try wafliron.

WAFL performs checksums on top of the software RAID.

The command output looks like this:

*** This system has failed.

Any adapters shown below are those of the live partner, toaster1

Aggregate aggr1 (restricted, raid_dp, wafl inconsistent) (block checksums)

Plex /aggr1/plex0 (online, normal, active)

RAID group /aggr1/plex0/rg0 (normal)

RAID Disk Device HA SHELF BAY CHAN Pool Type RPM Used (MB/blks) Phys (MB/blks)

--------- ------ ------------- ---- ---- ---- ----- -------------- --------------

data ntcsan6:19.126L0 0e - - - LUN N/A 432876/886530048 437248/895485360

data ntcsan5:18.126L2 0a - - - LUN N/A 432876/886530048 437248/895485360

data ntcsan5:18.126L1 0a - - - LUN N/A 432876/886530048 437248/895485360

data ntcsan5:18.126L6 0a - - - LUN N/A 415681/851314688 419880/859914720

data ntcsan5:18.126L5 0a - - - LUN N/A 415681/851314688 419880/859914720

data ntcsan6:19.126L8 0e - - - LUN N/A 415681/851314688 419880/859914720

data ntcsan6:19.126L7 0e - - - LUN N/A 415681/851314688 419880/859914720

data ntcsan5:18.126L10 0a - - - LUN N/A 415681/851314688 419880/859914720

RAID group /aggr1/plex0/rg1 (normal)

RAID Disk Device HA SHELF BAY CHAN Pool Type RPM Used (MB/blks) Phys (MB/blks)

--------- ------ ------------- ---- ---- ---- ----- -------------- --------------

data ntcsan6:19.126L12 0e - - - LUN N/A 367837/753330176 371553/760940880

data ntcsan5:18.126L13 0a - - - LUN N/A 367837/753330176 371553/760940880

data ntcsan6:18.126L6 0e - - - LUN N/A 415681/851314688 419880/859914720

data ntcsan6:18.126L10 0e - - - LUN N/A 411063/841857024 415215/850362240

data ntcsan6:18.126L13 0e - - - LUN N/A 422730/865751040 427000/874497120
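If you have captured status output like the above to a text file, a small script can point out which aggregates are flagged as inconsistent. The following is a minimal sketch in Python, assuming the `Aggregate <name> (<states>)` line format seen in the output above. The function name `find_inconsistent_aggregates` is made up for this example, and the `aggr0` line in the sample is hypothetical, added only to show a healthy aggregate being skipped.

```python
import re

# Matches lines such as:
#   Aggregate aggr1 (restricted, raid_dp, wafl inconsistent)
# Only the first parenthesised group on the line is captured.
AGGR_LINE = re.compile(r"^\s*Aggregate\s+(\S+)\s+\(([^)]*)\)", re.MULTILINE)


def find_inconsistent_aggregates(status_text):
    """Return names of aggregates whose state list has 'wafl inconsistent'."""
    names = []
    for match in AGGR_LINE.finditer(status_text):
        name, raw_states = match.groups()
        states = [state.strip() for state in raw_states.split(",")]
        if "wafl inconsistent" in states:
            names.append(name)
    return names


# The aggr1 line comes from the output above; the aggr0 line is hypothetical.
sample = """\
Aggregate aggr1 (restricted, raid_dp, wafl inconsistent) (block checksums)
Plex /aggr1/plex0 (online, normal, active)
Aggregate aggr0 (online, raid_dp) (block checksums)
"""
print(find_inconsistent_aggregates(sample))  # prints ['aggr1']
```

The names it returns are the aggregates to run WAFL_check or wafliron against.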

Wait until it finishes; it may take hours, depending on the size of the aggregate.